AI tools can transform your compliance workflow—but only if used responsibly. Based on our research into global regulatory guidance, here are the key principles every compliance professional should follow.

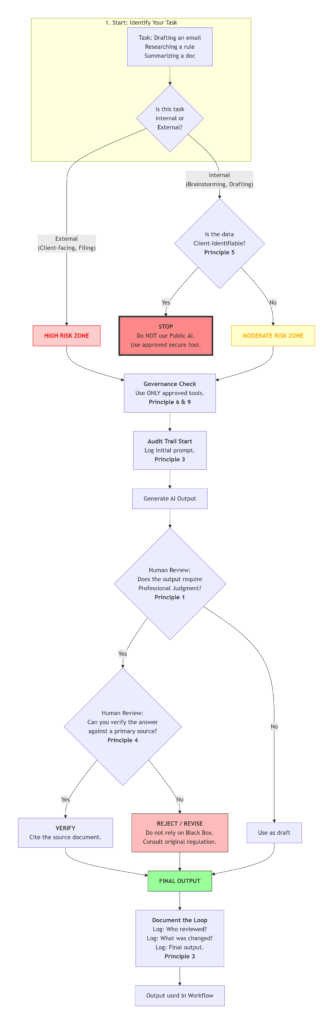

1. Always Keep a Human in the Loop

This is the single most important principle across all regulatory guidance. AI is a powerful assistant, but it should never replace professional judgment.

Regulators such as FINRA, and legislation such as the EU AI Act, explicitly require human oversight for AI-assisted decision-making that could impact customers or compliance outcomes. The reason is practical: AI models can state incorrect information with high confidence, a phenomenon called “hallucination.”

Implement mandatory human review for all AI-generated outputs before they are used in client-facing communications, compliance decisions, or regulatory filings. Document who reviewed what and when.
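
One way to make the review step hard to skip is to enforce it in whatever system releases AI-assisted content. The Python sketch below is a minimal illustration, not a prescribed implementation: the `Draft` class, its fields, and the `release` function are hypothetical names chosen for this example.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Draft:
    """An AI-generated draft awaiting human review (hypothetical structure)."""
    content: str
    reviewer: Optional[str] = None
    reviewed_at: Optional[datetime] = None

    def approve(self, reviewer: str) -> None:
        # Record who reviewed the draft and when.
        if not reviewer.strip():
            raise ValueError("A named reviewer is required")
        self.reviewer = reviewer
        self.reviewed_at = datetime.now(timezone.utc)


def release(draft: Draft) -> str:
    """Return the content for use only if a human has signed off."""
    if draft.reviewer is None or draft.reviewed_at is None:
        raise PermissionError("Draft has not been reviewed by a human")
    return draft.content


draft = Draft(content="Proposed client notice text")
draft.approve("j.chan")  # placeholder reviewer ID
print(release(draft))
```

Calling `release` on a draft nobody has approved raises an error, so the reviewer's name and timestamp exist before the content can be used.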

2. Implement Risk-Based Governance

Not all AI use carries the same level of risk. Your oversight should be proportionate to the potential impact.

Using AI to brainstorm internal ideas carries minimal risk. Using AI to draft customer communications or determine compliance obligations carries significant risk. Treating all uses the same leads to either unnecessary friction or dangerous gaps.

Classify AI use cases into three tiers:

- Low risk (internal brainstorming): Minimal oversight needed

- Moderate risk (research, summarization): Human review required

- High risk (customer-facing, compliance decisions): Formal approval and full documentation required

Document your classification framework in your compliance manual.
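
If you want the framework to be enforceable as well as documented, the three tiers can be encoded as a simple lookup. The Python sketch below is illustrative only. The use-case names and control labels are assumptions made for this example, as are two design choices: high-risk use includes human review, and unclassified use cases default to the strictest tier.

```python
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MODERATE = "moderate"
    HIGH = "high"


# Example use cases mapped to tiers; the use-case names are illustrative assumptions.
USE_CASE_TIERS = {
    "internal_brainstorming": RiskTier.LOW,
    "research": RiskTier.MODERATE,
    "summarization": RiskTier.MODERATE,
    "customer_communication": RiskTier.HIGH,
    "compliance_decision": RiskTier.HIGH,
}

# Controls per tier. LOW has no mandatory control in this sketch;
# what "minimal oversight" means in practice is left to firm policy.
REQUIRED_CONTROLS = {
    RiskTier.LOW: [],
    RiskTier.MODERATE: ["human_review"],
    RiskTier.HIGH: ["human_review", "formal_approval", "full_documentation"],
}


def controls_for(use_case: str) -> list[str]:
    # Assumption: anything not yet classified is treated as high risk.
    tier = USE_CASE_TIERS.get(use_case, RiskTier.HIGH)
    return REQUIRED_CONTROLS[tier]


print(controls_for("summarization"))
print(controls_for("new_unreviewed_tool"))
```

Defaulting unknown use cases to the high tier is a conservative choice: a new use case gets full oversight until someone classifies it.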

3. Maintain Comprehensive Audit Trails

Regulators expect traceability. If asked how you reached a compliance decision, “the AI told me” is not an acceptable answer.

Under the EU AI Act and evolving guidance from financial regulators, firms must be able to demonstrate how AI-assisted decisions were made. This includes knowing what prompts were used, what outputs were generated, and who reviewed them.

Log all prompts and outputs when AI is used for compliance work. Maintain version history of AI-generated content. Keep records of who reviewed and approved AI-assisted decisions. Your audit trail is your defense.
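
As a rough illustration of what such a log could look like, the Python sketch below builds one record per AI interaction and chains each record to the previous one with a SHA-256 hash, so later edits are detectable. The field names, the hash-chaining approach, and the JSON Lines file format are assumptions made for this example, not requirements drawn from any regulator.

```python
import hashlib
import json
from datetime import datetime, timezone


def make_audit_record(prompt: str, output: str, tool: str,
                      reviewer: str, prev_hash: str = "") -> dict:
    """Build one audit entry; prev_hash chains it to the previous entry."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "tool": tool,
        "prompt": prompt,
        "output": output,
        "reviewer": reviewer,
        "prev_hash": prev_hash,
    }
    # Hash the canonical JSON form so later edits to the entry are detectable.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["hash"] = hashlib.sha256(payload).hexdigest()
    return record


def verify_chain(records: list[dict]) -> bool:
    """Check that every entry's hash matches its content and its predecessor."""
    prev = ""
    for rec in records:
        body = {k: v for k, v in rec.items() if k != "hash"}
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        expected = hashlib.sha256(payload).hexdigest()
        if rec["prev_hash"] != prev or rec["hash"] != expected:
            return False
        prev = rec["hash"]
    return True


def append_record(path: str, record: dict) -> None:
    # Append-only JSON Lines file: one record per line.
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(record, sort_keys=True) + "\n")
```

A hash chain only makes tampering detectable. It does not replace access controls or your firm's existing record-retention systems.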

4. Address the “Black Box” Problem

AI models can produce authoritative-sounding answers that are completely wrong. They can also embed hidden biases from their training data.

Hong Kong’s Securities and Futures Commission (SFC) and other regulators emphasize that firms must understand the limitations of the AI tools they use. If you cannot explain how a tool reached a conclusion, you should not rely on it for high-stakes decisions.

Always verify AI outputs against primary sources—actual regulations, not just summaries. For high-stakes compliance work, ensure every AI-generated statement can be traced back to a verifiable source. Use tools that provide citations and source references.

5. Protect Confidential Data

When you paste client information into a public AI tool, you may be sharing confidential data with third parties who can use it for model training.

Data privacy laws including GDPR and Hong Kong’s Personal Data (Privacy) Ordinance (PDPO) require firms to protect clients’ personal data. Consumer-grade AI tools often retain and learn from inputs, which creates a risk of data breaches.

Never input client-identifiable information into public AI tools. Ensure your approved AI tools have appropriate data protection safeguards, including data isolation and commitments not to use your data for training. Review vendor privacy policies carefully.
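
As a last line of defense, some firms add an automated redaction step before any text reaches an AI tool. The Python sketch below shows the general idea with two deliberately simple patterns. The patterns and placeholder labels are assumptions for illustration; they will miss names, account numbers, and addresses, so treat this as a backstop rather than a substitute for the policy above.

```python
import re

# Illustrative patterns only; real client data needs far broader coverage.
# The phone pattern is loose and will also redact other long digit runs (e.g. dates).
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s-]{6,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = PHONE_RE.sub("[PHONE]", text)
    return text


print(redact("Contact jane.doe@example.com or +852 2345 6789 about the account."))
```

Over-redacting, as the loose phone pattern does, is usually the safer failure mode here, because a missed identifier cannot be recalled once it has been sent.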

6. Address “Shadow AI”

Your employees are already using AI tools—whether you’ve approved them or not. Ignoring this creates significant compliance risk.

When employees use unauthorized tools, you lose visibility into what data is being shared and how decisions are being made. The solution isn’t just prohibition—it’s providing better approved alternatives.

Survey your team to understand what AI tools they’re currently using. Develop clear policies on approved tools and prohibited uses. Provide training on the risks of unauthorized AI.
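
Once survey results are in, comparing them against your approved list is a simple exercise. The Python sketch below assumes the responses have been collected as a mapping from person to reported tools. All tool and employee names are placeholders.

```python
# Hypothetical approved list and survey data, for illustration only.
APPROVED_TOOLS = {"approved-assistant"}

survey_responses = {
    "alice": ["approved-assistant"],
    "bob": ["approved-assistant", "public-chatbot"],
    "carol": ["browser-summarizer"],
}


def unapproved_usage(responses: dict[str, list[str]],
                     approved: set[str]) -> dict[str, list[str]]:
    """Map each unapproved tool to the sorted list of people who reported using it."""
    usage: dict[str, list[str]] = {}
    for person, tools in responses.items():
        for tool in tools:
            if tool not in approved:
                usage.setdefault(tool, []).append(person)
    return {tool: sorted(people) for tool, people in sorted(usage.items())}


print(unapproved_usage(survey_responses, APPROVED_TOOLS))
```

Grouping by tool rather than by person shows which unapproved tools are most in demand, and so which approved alternatives to prioritize.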

7. Ensure Ongoing Validity and Reliability

An AI tool that worked perfectly last month may produce incorrect outputs today. Regulations change, and AI models update.

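The IPC-OHRC Principles, issued jointly by Ontario’s Information and Privacy Commissioner and the Ontario Human Rights Commission, emphasize that AI systems must be demonstrably reliable throughout their lifecycle. A tool validated at deployment may drift over time as underlying models change.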

Regularly test your AI tools against current regulatory requirements. Schedule quarterly reviews to verify accuracy. Document evidence that the AI continues to meet requirements for its intended use. If a tool updates, re-validate it.
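
A periodic review can be as simple as re-running a fixed set of reference questions and checking the answers. The Python sketch below shows one way to structure that, assuming a human-verified reference set and a plain substring check. The questions, expected phrases, and the `stub_tool` function are placeholders; in practice `ask` would wrap your approved tool, and a substring match is a crude proxy for expert review.

```python
from typing import Callable

# Hypothetical reference set: each question is paired with a phrase the answer
# must contain, verified by a compliance professional against the primary source.
REFERENCE_CASES = [
    ("What is the retention period under policy X?", "five years"),
    ("Who must approve client communications?", "compliance officer"),
]


def validate(ask: Callable[[str], str], cases: list[tuple[str, str]],
             threshold: float = 1.0) -> dict:
    """Run each reference question through the tool and report the pass rate."""
    if not cases:
        raise ValueError("At least one reference case is required")
    failures = []
    for question, expected_phrase in cases:
        answer = ask(question)
        if expected_phrase.lower() not in answer.lower():
            failures.append(question)
    pass_rate = 1 - len(failures) / len(cases)
    return {"pass_rate": pass_rate, "failures": failures,
            "passed": pass_rate >= threshold}


def stub_tool(question: str) -> str:
    # Stand-in for a real AI tool call; replace with your approved tool's client.
    return "Records are kept for five years." if "retention" in question else "Unknown."


report = validate(stub_tool, REFERENCE_CASES)
print(report)
```

Saving each report alongside the tool's version and the review date gives you documented evidence that the tool still meets requirements, and a baseline to compare against after an update.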

8. Update Your Written Supervisory Procedures

If your compliance policies don’t mention AI, they’re already outdated. Regulators expect firms to adapt their frameworks to address how AI is being used.

FINRA explicitly requires firms to update their Written Supervisory Procedures to account for AI use, for example by specifying who may use AI, for what purposes, and how outputs must be reviewed.

Review and update your compliance manual to address:

- Who may use AI tools and for what purposes

- What data can be used with AI systems

- How outputs must be reviewed and approved

- Recordkeeping requirements for AI-assisted work

- Consequences for policy violations

9. Manage Third-Party AI Risk

If you use AI vendors, you’re still responsible for compliance. Outsourcing the tool does not outsource the accountability.

Regulators expect the same level of oversight for AI vendors as for any critical service provider. You need to understand how their models work, where your data goes, and what controls they have in place.

Conduct due diligence on AI vendors before engagement. Update contracts to address AI usage, data rights, and security controls. Independently review vendor SOC reports or equivalent assurance reports. Monitor vendor performance and controls on an ongoing basis.

10. Stay Updated on Regulatory Expectations

AI regulation is moving from principles to enforcement. What was acceptable guidance last year may be a compliance gap today.

The EU AI Act provides a benchmark that influences regulators globally. The SFC, the Monetary Authority of Singapore (MAS), and other Asian regulators are actively monitoring international developments and adapting their requirements.

Monitor guidance from relevant regulators (SFC, FINRA, ESMA, MAS). Subscribe to regulatory updates. Join industry working groups on AI governance. Treat your AI governance as a living program, not a one-time exercise. Update your policies as requirements evolve.

About this Resource

This guide is based on our ongoing research into global AI regulatory developments. It is intended for informational purposes and should not be construed as legal advice. Regulations and AI guidance are subject to change. You should consult with qualified legal counsel regarding your specific circumstances.

Last updated: February 2026 | Reglexa | reglexa.com
